Apache Spark has become an essential part of operations at big technology firms such as Yahoo, Facebook, Amazon and eBay. This is mainly owing to its speed: Apache Spark is one of the fastest engines for big data workloads. The reason behind this speed is that, rather than writing intermediate results to disk, it keeps working data in memory (RAM) wherever possible. Hence, data processing in Spark is typically much faster than in Hadoop MapReduce.

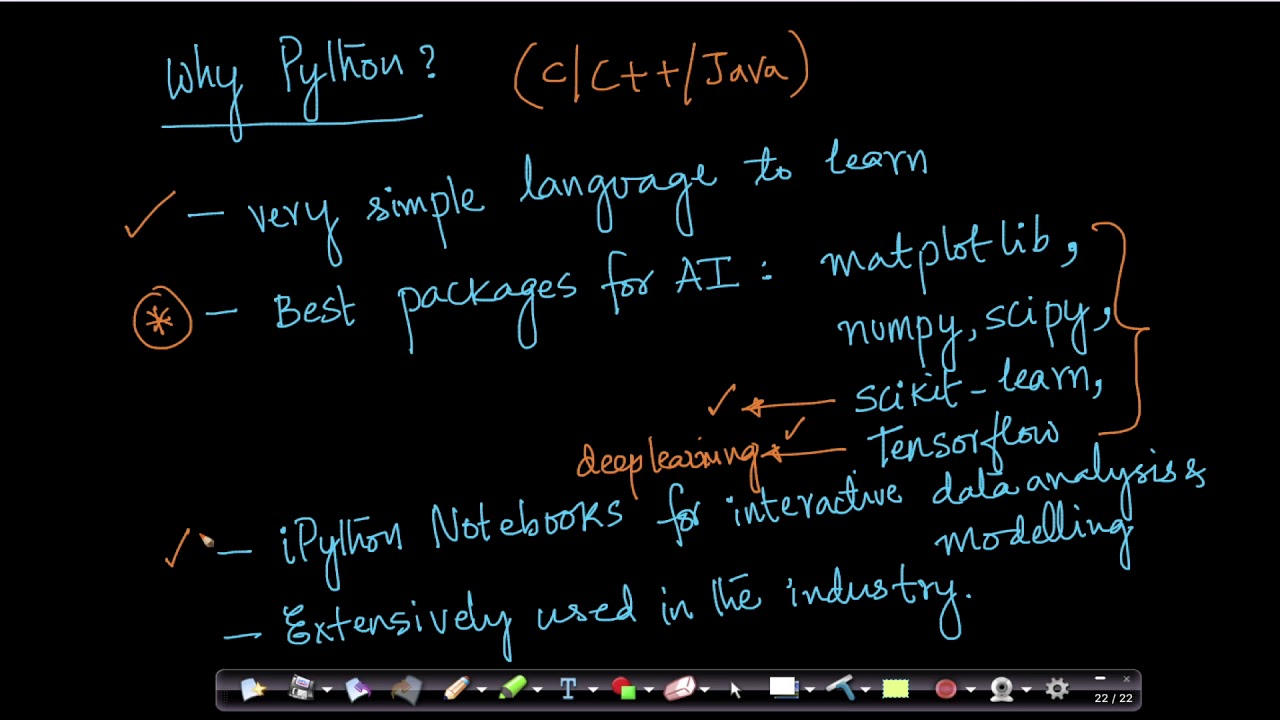

The main purpose of Apache Spark is to offer an integrated platform for big data processing. It also offers robust APIs in Python, Java, R and Scala. Additionally, integration with the Hadoop ecosystem is very convenient.

Why Apache Spark for ML applications?

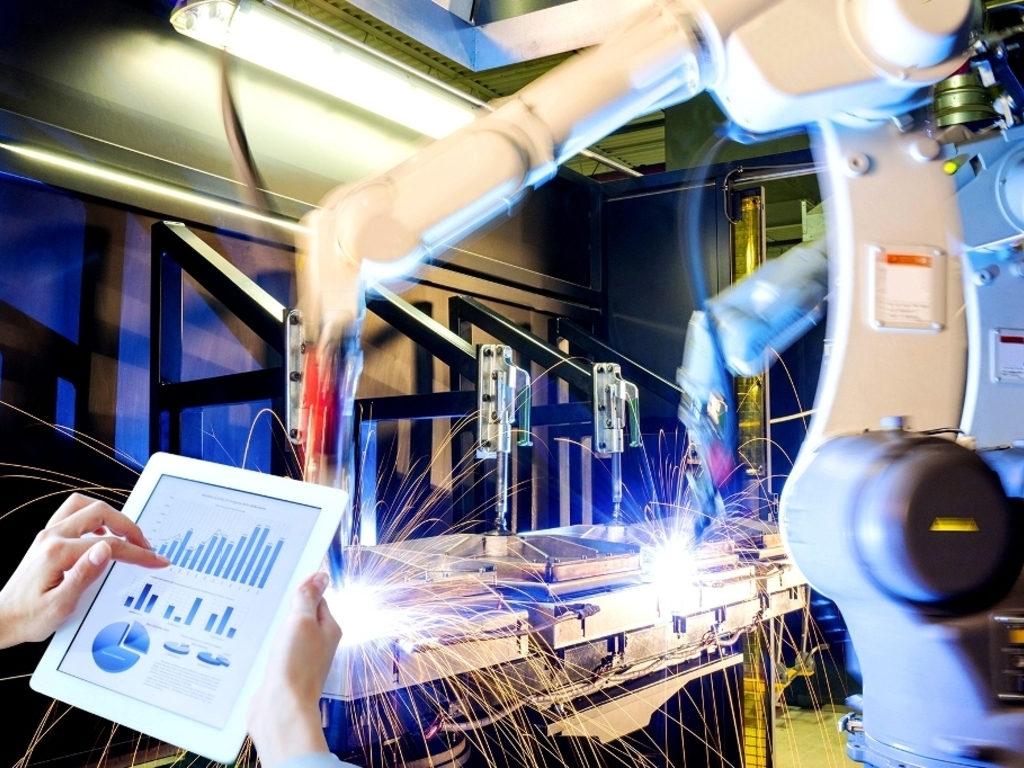

Many machine learning processes involve heavy computation. Distributing such processes through Apache Spark is a fast, simple and efficient approach. Industrial applications need a powerful engine that can process data in real time, run in batch mode and work in memory. With Apache Spark, real-time streaming, graph processing, interactive processing and batch processing are all possible through one fast and simple interface. This is why Spark is so popular in ML applications.

Apache Spark Use Cases:

Below are some noteworthy applications of the Apache Spark engine across different fields:

Entertainment: In the gaming industry, Apache Spark is used to discover patterns in the firehose of real-time gaming information and respond to them quickly. Jobs like targeted advertising, player retention and automatic adjustment of difficulty levels can be run on the Spark engine.

E-commerce: In the e-commerce sector, providing recommendations in step with fresh trends and demands is crucial. This is achieved by feeding real-time data to streaming clustering algorithms such as k-means, the results of which are then merged with various unstructured data sources, like customer feedback. With the aid of Apache Spark, ML algorithms process the enormous volume of interactions between users and an e-commerce platform, which are expressed as complex graphs.
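
To make the idea of streaming clustering more concrete, here is a minimal plain-Python sketch of how a k-means model can be updated one mini-batch at a time. It is only an illustration of the general technique and does not use Spark or reproduce its API; the function names and the `decay` parameter are assumptions made for this example.

```python
def nearest(point, centers):
    """Return the index of the center closest to point (squared Euclidean distance)."""
    def sq_dist(c):
        return sum((a - b) ** 2 for a, b in zip(point, c))
    return min(range(len(centers)), key=lambda i: sq_dist(centers[i]))


def update_centers(centers, counts, batch, decay=1.0):
    """Fold one mini-batch of points into the running cluster centers.

    centers: list of coordinate lists, one per cluster
    counts:  weight of the data each center has absorbed so far
    decay:   hypothetical forgetting factor; 1.0 keeps all history,
             smaller values let new data dominate sooner
    """
    dims = len(centers[0])
    sums = [[0.0] * dims for _ in centers]
    sizes = [0] * len(centers)

    # Assign every new point to its nearest current center.
    for point in batch:
        k = nearest(point, centers)
        sizes[k] += 1
        for d in range(dims):
            sums[k][d] += point[d]

    # Blend each old center with the mean of the points it just received.
    new_centers, new_counts = [], []
    for k, center in enumerate(centers):
        old_weight = counts[k] * decay
        total = old_weight + sizes[k]
        if total == 0:
            # No history and no new points: leave this center untouched.
            new_centers.append(list(center))
            new_counts.append(0.0)
            continue
        new_centers.append(
            [(center[d] * old_weight + sums[k][d]) / total for d in range(dims)]
        )
        new_counts.append(total)
    return new_centers, new_counts


# Example: two clusters, updated as two small batches arrive.
centers, counts = [[0.0, 0.0], [10.0, 10.0]], [0.0, 0.0]
centers, counts = update_centers(centers, counts, [(1.0, 1.0), (3.0, 3.0), (9.0, 9.0), (11.0, 11.0)])
centers, counts = update_centers(centers, counts, [(4.0, 4.0)])
```

Because each batch only adjusts the existing centers, the model can follow shifting trends without reprocessing the full history, which is the property that makes this style of clustering a good fit for real-time recommendation data.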

Finance: In finance, Apache Spark is very helpful for detecting fraud or intrusion and for authentication. When used with ML, it can study the spending of individuals and frame suggestions that the bank can offer to introduce customers to new products and avenues. Moreover, financial problems are identified quickly and accurately. PayPal incorporates ML techniques like neural networks to spot unethical or fraudulent transactions.

Healthcare: Apache Spark is used to analyze the medical history of patients and determine who is prone to which ailment in the future. Spark is also applied in genomic data sequencing to bring down processing time.

Media: Several websites use Apache Spark together with MongoDB to provide better video recommendations to users, which are generated from their historical data.

ML and Apache Spark:

Many enterprises have been working with Apache Spark and ML algorithms for improved results. Yahoo, for example, uses Apache Spark along with ML algorithms to surface new topics that can enhance user interest. If ML alone were used for this purpose, over 20,000 lines of code in C or C++ would be needed, but with Apache Spark the code is cut down to 150 lines. Another example is Netflix, where Apache Spark is used for real-time streaming to provide better video recommendations to users. Streaming technology depends on event data, and Apache Spark's ML facilities greatly improve the efficiency of video recommendations.

Spark has a separate library for machine learning called MLlib, which includes algorithms for classification, collaborative filtering, clustering, dimensionality reduction, etc. Classification is basically sorting things into relevant categories. For example, an email application sorts mail into categories such as inbox, drafts and sent. Many websites suggest products to users depending on their past purchases; this is collaborative filtering. Other applications supported by Apache Spark MLlib are sentiment analysis and customer segmentation.
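
The idea of suggesting products based on past purchases can be sketched in a few lines of plain Python. The example below is a deliberately simple co-occurrence recommender, not the algorithm that Spark's library uses and not Spark code; the function names, user names and product names are all hypothetical.

```python
from collections import Counter, defaultdict
from itertools import permutations


def build_cooccurrence(baskets):
    """Count, for each item, how often every other item was bought by the same user."""
    co = defaultdict(Counter)
    for items in baskets.values():
        for a, b in permutations(set(items), 2):
            co[a][b] += 1
    return co


def recommend(user, baskets, co, top_n=3):
    """Suggest items the user does not own, ranked by co-purchase counts."""
    owned = set(baskets[user])
    scores = Counter()
    for item in owned:
        for other, n in co.get(item, {}).items():
            if other not in owned:
                scores[other] += n
    # Highest score first; ties are broken alphabetically for stable output.
    ranked = sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))
    return [item for item, _ in ranked[:top_n]]


# Hypothetical purchase histories.
baskets = {
    "ana": ["laptop", "mouse"],
    "ben": ["laptop", "mouse", "keyboard"],
    "cara": ["laptop", "keyboard"],
    "dev": ["laptop"],
}
co = build_cooccurrence(baskets)
suggestions = recommend("ana", baskets, co)
```

Here a user who bought a laptop and a mouse is pointed towards the keyboard that similar shoppers also bought. A production system would swap this counting step for a model trained on the full interaction data and distribute the work across a cluster.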

Conclusion:

Apache Spark is a highly powerful engine for machine learning applications. Its aim is to bring big data processing to a wide audience and to make machine learning practical and approachable. Challenging tasks like processing massive volumes of data, both real-time and archived, are simplified through Apache Spark. Streaming and predictive analytics solutions of many kinds benefit hugely from its use.

If this article has piqued your interest in Apache Spark, take the next step right away and join Apache Spark training in Delhi. DexLab Analytics offers one of the best Apache Spark certification courses in Gurgaon, where experienced industry professionals train you so that you master this leading technology and make remarkable progress in your line of work.
