Two decades ago, if someone had asked me to write a computer program that played tic-tac-toe, I would have failed horribly. Now, as an accomplished programmer, I know the tricks needed to solve tic-tac-toe with the help of the minimax algorithm, and it takes only about an hour to write the program. No doubt my coding skills have evolved over time, but computer science has also reached heights that once seemed unattainable.
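For readers curious about what such a program looks like, here is a minimal sketch of minimax for tic-tac-toe. It is an illustration rather than the program described above: the board encoding (a 9-character string, row by row) and the names `winner`, `minimax` and `best_move` are assumptions made for this example.

```python
from functools import lru_cache

# The eight winning lines on a 3x3 board whose cells are indexed 0..8, row by row.
LINES = [(0, 1, 2), (3, 4, 5), (6, 7, 8),
         (0, 3, 6), (1, 4, 7), (2, 5, 8),
         (0, 4, 8), (2, 4, 6)]


def winner(board):
    """Return 'X' or 'O' if that player has three in a row, otherwise None."""
    for a, b, c in LINES:
        if board[a] != ' ' and board[a] == board[b] == board[c]:
            return board[a]
    return None


@lru_cache(maxsize=None)
def minimax(board, player):
    """Score a position with `player` to move, assuming best play by both sides.

    Returns +1 if X wins, -1 if O wins and 0 for a draw.
    """
    won = winner(board)
    if won is not None:
        return 1 if won == 'X' else -1
    if ' ' not in board:
        return 0  # board is full and nobody has won
    other = 'O' if player == 'X' else 'X'
    scores = [minimax(board[:i] + player + board[i + 1:], other)
              for i, cell in enumerate(board) if cell == ' ']
    # X picks the highest score available, O picks the lowest.
    return max(scores) if player == 'X' else min(scores)


def best_move(board, player):
    """Return the index of an empty cell that gives `player` the best minimax score."""
    other = 'O' if player == 'X' else 'X'
    moves = [i for i, cell in enumerate(board) if cell == ' ']

    def score(i):
        return minimax(board[:i] + player + board[i + 1:], other)

    return max(moves, key=score) if player == 'X' else min(moves, key=score)
```

Calling `minimax(' ' * 9, 'X')` on the empty board returns 0, reflecting the well-known fact that tic-tac-toe is a draw when both sides play perfectly. The same exhaustive search is hopeless for Go, because the number of possible positions is far too large.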

Computers have paved the way for startling innovation. In 1997, IBM's chess-playing computer Deep Blue beat world-renowned Grandmaster Garry Kasparov in a six-game match, and people remained in awe for years. Following the trend, in 2016, Google’s London-based AI company DeepMind launched AlphaGo, which mastered the ancient board game Go. Computers had already outplayed the best human players at chess, draughts and backgammon; now it is Go's turn.

The technology goes on thriving and beating humans at games. In late May, AlphaGo is set to take on its human rival Ke Jie, the best player in the world, during the Future of Go Summit in Wuzhen, China. Games that once relied solely on human intelligence, wit, intuition and discernment are now being mastered by AI, powered by improved engineering and greater computing power.

Isn't this great? AI is already driving our cars, looking for ways to cure deadly cancers and helping us with everyday work; winning at Go takes it a step further. It makes games not only more fun and exciting, but endlessly enjoyable.

The strategy explained

In the eastern part of the world, notably in China, Japan and South Korea, Go is extremely popular, and many celebrities play it. Game developers have long been interested in the complexity of this game, even though the rules are simple: the main objective is to secure the most territory by placing black and white stones on a 19×19 grid and capturing the opponent's stones.
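To make the capturing rule concrete: under the standard rules of Go, a connected group of stones is captured when it has no liberties left, meaning no empty point directly adjacent to any stone in the group. The sketch below checks this with a flood fill. The board encoding (a list of strings using `'B'`, `'W'` and `'.'`) and the function names are assumptions chosen for this example, and a small 5×5 board stands in for the full 19×19 grid.

```python
def group_and_liberties(board, row, col):
    """Flood-fill from (row, col) to find the connected group and its liberties.

    `board` is a list of equal-length strings: 'B' for black, 'W' for white
    and '.' for an empty point. Returns two sets of (row, col) tuples.
    """
    colour = board[row][col]
    if colour == '.':
        raise ValueError("there is no stone at that point")
    size = len(board)
    group, liberties = set(), set()
    stack = [(row, col)]
    while stack:
        r, c = stack.pop()
        if (r, c) in group:
            continue
        group.add((r, c))
        # Only the four orthogonal neighbours count in Go; diagonals do not.
        for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            if 0 <= nr < size and 0 <= nc < size:
                if board[nr][nc] == '.':
                    liberties.add((nr, nc))
                elif board[nr][nc] == colour:
                    stack.append((nr, nc))
    return group, liberties


def is_captured(board, row, col):
    """A group is captured when it has no liberties left."""
    _, liberties = group_and_liberties(board, row, col)
    return not liberties


# A white stone surrounded on all four sides by black stones.
demo = [
    ".B...",
    "BWB..",
    ".B...",
    ".....",
    ".....",
]
```

Here `is_captured(demo, 1, 1)` is true for the surrounded white stone, while the black stone at `(0, 1)` still has two liberties and stays on the board.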

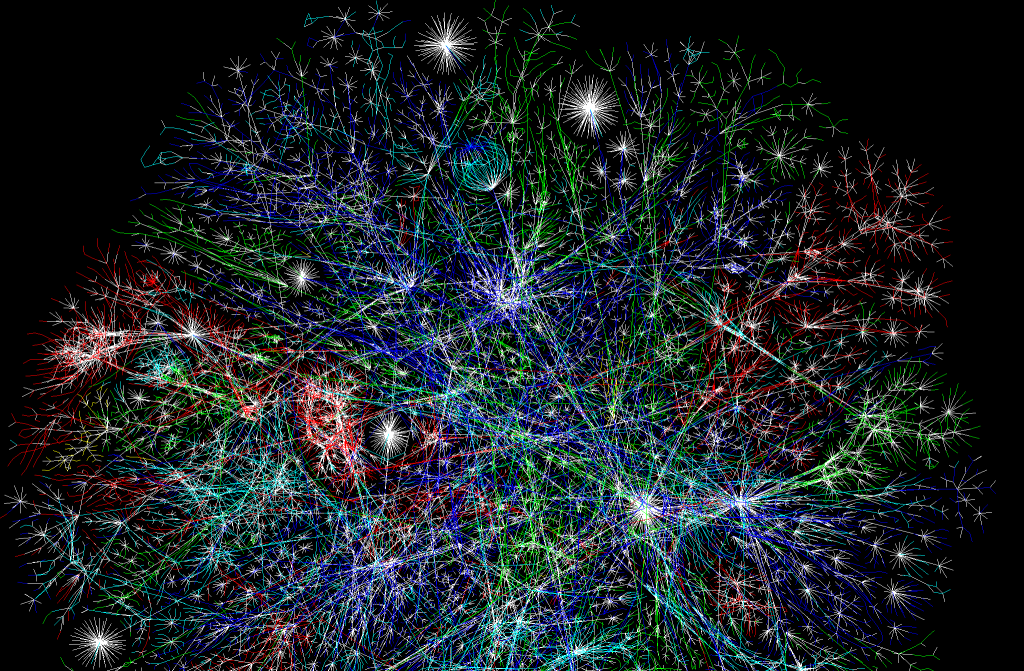

Go is more complicated than chess: recognising wins and losses is considerably harder, because all stones have equal value and their effects across the board are subtle. To play Go, the AlphaGo program used deep learning in neural networks, a brain-inspired approach in which the connections between layers of simulated neurons are strengthened through examples and experience. First, it analysed 30 million positions from expert games, gleaning abstract information about the state of play from the board data, much as other programs classify images from pixels. Finally, it played against itself across 50 computers, improving its performance with each iteration, a technique known as reinforcement learning.
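The idea of improving through self-play can be sketched on a much smaller game. The example below is not AlphaGo's method, which relies on deep neural networks. It is a toy stand-in that swaps in a simple lookup table and the game of Nim (players alternately take 1 to 3 stones from a pile, and whoever takes the last stone wins). All names and parameter values here are assumptions chosen for illustration.

```python
import random


def legal_moves(pile):
    """A player may take 1, 2 or 3 stones, but never more than remain."""
    return [n for n in (1, 2, 3) if n <= pile]


def greedy_move(q, pile):
    """Pick the move with the highest learned value for the player to move."""
    return max(legal_moves(pile), key=lambda n: q.get((pile, n), 0.0))


def train_by_self_play(start_pile=10, episodes=5000, alpha=0.1,
                       epsilon=0.2, seed=0):
    """Learn move values by letting one table play both sides of every game."""
    rng = random.Random(seed)
    q = {}  # maps (pile, move) to an estimated outcome between -1 and +1
    for _ in range(episodes):
        pile, player = start_pile, 0
        history = {0: [], 1: []}
        while pile > 0:
            # Explore a random move occasionally, otherwise play the best known one.
            if rng.random() < epsilon:
                move = rng.choice(legal_moves(pile))
            else:
                move = greedy_move(q, pile)
            history[player].append((pile, move))
            pile -= move
            player = 1 - player
        winner = 1 - player  # the player who just took the last stone
        for who, steps in history.items():
            reward = 1.0 if who == winner else -1.0
            for key in steps:
                old = q.get(key, 0.0)
                # Nudge the estimate towards the result of this game.
                q[key] = old + alpha * (reward - old)
    return q


q_table = train_by_self_play()
```

After training, `greedy_move(q_table, 2)` picks 2, taking both remaining stones to win. Nobody told the program this; it discovered it by losing games in which it took only one. AlphaGo applied the same principle at a vastly larger scale.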

The round of applause

“AlphaGo plays in a human way,” says Fan Hui, the European Go champion, who was beaten by DeepMind’s program. He added, “If no one told me, maybe I would think the player was a little strange, but a very strong player, a real person.” “The program seems to have developed a conservative (rather than aggressive) style,” adds Toby Manning, a veteran Go player and referee.
