Apache Spark 2.4 recently joined the big data bandwagon, and it is incredible. It brings experimental support for Scala 2.12. Join us as we dig into the features of the latest Spark version and see what else it has to offer big data developers, apart from a brand new barrier execution mode and availability in Databricks Runtime 5.0!

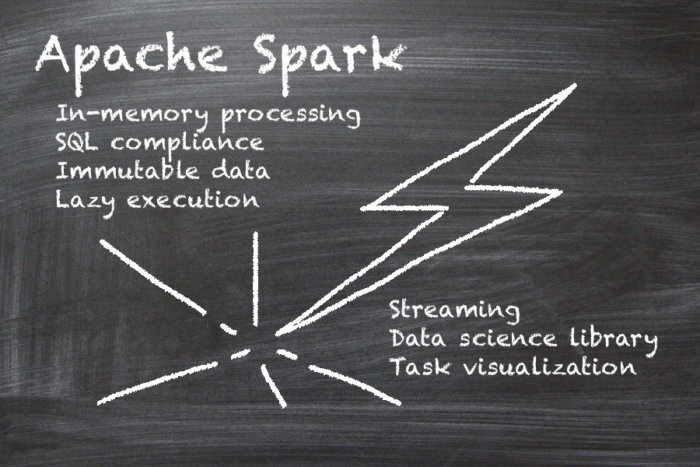

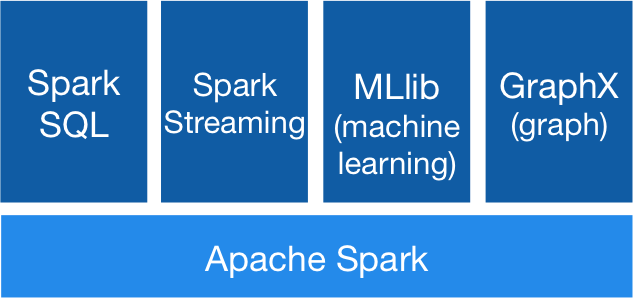

Of late, while we were all busy tapping into the IoT revolution and the latest discoveries in AI, Apache Spark rolled out a new array of exciting tech features to enhance the experience of data scientists and developers. The power package is Apache Spark 2.4: it boasts a dozen improved features and upgrades that tackle large-scale data processing in a jiffy. As most readers know, Apache Spark is a powerful analytics engine designed to handle humongous volumes of data with speed and efficiency. Under the Apache Software Foundation umbrella, Spark is one of the most successful projects and one of the most active open source big data projects.

The latest Spark version combines its long-standing goals, such as ease of use, efficiency and speed, with stability and refinement. On a positive note, Project Hydrogen is finally panning out as expected. It is designed to ensure better coordination between big data and AI, so that deep learning frameworks work well with Spark. The new barrier execution mode bolsters integration with distributed deep learning architectures. Until now this integration has been a bit intricate, because distributed training relies on elaborate communication patterns between tasks, and these result in frequent snags and blockages.

However, thanks to the new barrier execution mode, Spark can launch training tasks together, much like MPI tasks, and promptly restart all of them when a task failure occurs. This release also introduces a new fault-tolerance mechanism for barrier tasks: whenever a barrier task fails, Spark aborts all the tasks and restarts the stage.
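The all-or-nothing behaviour described above can be illustrated with a small plain-Python model. This is only a sketch of the semantics and does not use Spark: `run_barrier_stage` and `training_task` are hypothetical names, threads stand in for Spark tasks, and the failure on the first attempt is simulated. In PySpark 2.4 itself, the corresponding entry points are `rdd.barrier().mapPartitions(...)` and `BarrierTaskContext.get().barrier()`.

```python
import threading


def run_barrier_stage(num_tasks, task_fn, max_attempts=3):
    """Run num_tasks tasks together; if any one fails, rerun the whole stage."""
    for attempt in range(1, max_attempts + 1):
        barrier = threading.Barrier(num_tasks)  # fresh barrier for each attempt
        results = [None] * num_tasks
        errors = []
        lock = threading.Lock()

        def worker(task_id):
            try:
                results[task_id] = task_fn(task_id, attempt, barrier)
            except Exception as exc:
                with lock:
                    errors.append(exc)
                # Break the barrier so peers waiting on it stop as well.
                barrier.abort()

        threads = [threading.Thread(target=worker, args=(i,))
                   for i in range(num_tasks)]
        for t in threads:
            t.start()
        for t in threads:
            t.join()

        if not errors:
            return attempt, results
        # At least one task failed: drop partial results and retry every task.

    raise RuntimeError("stage failed after %d attempts" % max_attempts)


def training_task(task_id, attempt, barrier):
    # Hypothetical failure: task 1 crashes on the first attempt only.
    if attempt == 1 and task_id == 1:
        raise ValueError("simulated task failure")
    barrier.wait()  # every task blocks here until all tasks have arrived
    return task_id * 10


attempt_used, stage_results = run_barrier_stage(3, training_task)
print(attempt_used, stage_results)  # prints: 2 [0, 10, 20]
```

On the first attempt the simulated crash breaks the barrier, so the two healthy tasks are aborted too. The second attempt reruns all three tasks from scratch, which mirrors the "abort all tasks and restart the stage" rule.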

In addition, Spark 2.4 comes with built-in higher-order functions for complex types such as arrays and maps. These functions let developers work with complex types directly, and they can manipulate complex values with an anonymous lambda function.
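To make the idea concrete, here is a plain-Python model of what four of these higher-order functions compute on an array. It is a sketch of the semantics only, not Spark code: the helper names are illustrative, and the Spark SQL expressions in the comments assume a hypothetical array column called `scores`.

```python
from functools import reduce


def transform(values, fn):
    """Apply fn to every element of the array."""
    return [fn(x) for x in values]


def filter_array(values, predicate):
    """Keep only the elements for which predicate is true."""
    return [x for x in values if predicate(x)]


def exists(values, predicate):
    """Return True if predicate holds for at least one element."""
    return any(predicate(x) for x in values)


def aggregate(values, zero, merge):
    """Fold the array into a single value, starting from zero."""
    return reduce(merge, values, zero)


scores = [1, 2, 3, 4]

# Spark SQL: transform(scores, x -> x + 1)
plus_one = transform(scores, lambda x: x + 1)
# Spark SQL: filter(scores, x -> x % 2 = 0)
evens = filter_array(scores, lambda x: x % 2 == 0)
# Spark SQL: exists(scores, x -> x > 3)
has_big = exists(scores, lambda x: x > 3)
# Spark SQL: aggregate(scores, 0, (acc, x) -> acc + x)
total = aggregate(scores, 0, lambda acc, x: acc + x)

print(plus_one, evens, has_big, total)  # prints: [2, 3, 4, 5] [2, 4] True 10
```

The benefit in Spark is that the lambda runs inside the SQL engine on the array value itself, so there is no need to explode the array into rows and regroup it, or to fall back to a user-defined function.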

The new Spark offers experimental support for Scala 2.12. Owing to this, developers can now write entire Spark applications in Scala 2.12 simply by picking the Scala 2.12 Spark dependency. Scala 2.12 also brings improved interoperability with Java 8, which results in better serialization of lambda functions.

This latest Spark release also features built-in support for Apache Avro, the widely recognized data serialization format. As a result, developers can now read and write their Avro data within Spark itself. The Avro module first started off as a Databricks project, and today it boasts a host of new functions and support for Avro logical types.

Moreover, Apache Spark 2.4 refines its Kubernetes integration in three particular ways:

- Containerized PySpark and SparkR applications can now run on Kubernetes,

- Client mode is now supported,

- More mounting options are available for Kubernetes volumes.

Other notable improvements include:

- Pandas UDF upgrades,

- Eager evaluation of DataFrames in notebooks,

- Elimination of the 2 GB block size limitation.

Additionally, the new release is available as part of Databricks Runtime 5.0.

Want to know more? Check out our Apache Spark training courses in Delhi. They are well curated and student-friendly. DexLab Analytics is known not only for its Scala training in Delhi; our Spark training courses are also highly advanced and industry-relevant.

The blog has been sourced from jaxenter.com/apache-spark-2-4-overview-151623.html
