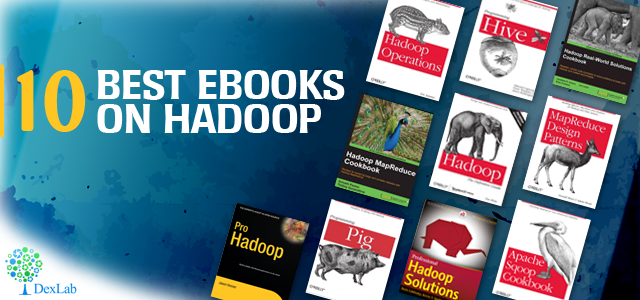

Hadoop is a free, open-source, Java-based programming framework for processing very large data sets in a distributed computing environment. It is sponsored by the Apache Software Foundation. If you want to learn Hadoop, you will need in-depth knowledge of the framework and its associated tools. The eBooks listed below will help you get up to speed with the core concepts.

MapReduce

If you want to get started with Hadoop and get the most out of Hadoop clusters, this book is a good fit. It is packed with information on how to use the framework and the tools Hadoop provides to scale applications effectively. Its step-by-step instructions walk you through the intricacies of Hadoop and take you from Hadoop newbie to someone who can run and troubleshoot complex Hadoop applications across large clusters of machines.
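As a rough illustration of the programming model that Hadoop MapReduce is built on, the sketch below runs the map, shuffle, and reduce phases of a word count in plain Java inside a single JVM. It deliberately uses no Hadoop classes; the class and method names are hypothetical, so it shows only the data flow, not Hadoop's real `Mapper`/`Reducer` API or how work is distributed across a cluster.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical, single-JVM simulation of MapReduce; not the Hadoop API.
class WordCountSketch {

    // Map phase: turn one input line into (word, 1) pairs.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String word : line.toLowerCase().split("\\s+")) {
            if (!word.isEmpty()) { // split() can yield a leading empty token
                pairs.add(Map.entry(word, 1));
            }
        }
        return pairs;
    }

    // Shuffle phase: group all emitted values by key (sorted, like Hadoop's keys).
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> groups = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            groups.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return groups;
    }

    // Reduce phase: sum the values collected for one key.
    static int reduce(List<Integer> counts) {
        int total = 0;
        for (int c : counts) {
            total += c;
        }
        return total;
    }

    // Drives the three phases over every input line.
    static Map<String, Integer> wordCount(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> group : shuffle(pairs).entrySet()) {
            result.put(group.getKey(), reduce(group.getValue()));
        }
        return result;
    }

    public static void main(String[] args) {
        List<String> input = List.of("the quick brown fox", "the lazy dog", "The end");
        System.out.println(wordCount(input));
    }
}
```

Running `main` prints `{brown=1, dog=1, end=1, fox=1, lazy=1, quick=1, the=3}`. In a real Hadoop job, the framework would run many map and reduce tasks in parallel on different machines and perform the shuffle over the network.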

Programming Pig

If you are looking for a reference on Apache Pig, the open-source engine for executing parallel data flows on Hadoop, Programming Pig is meant for you. It serves new users and also gives advanced users coverage of Pig's most important features: the “Pig Latin” scripting language, the “Grunt” shell, and user-defined functions for extending Pig even further. After reading this book, analyzing terabytes of data becomes a far less tedious task.

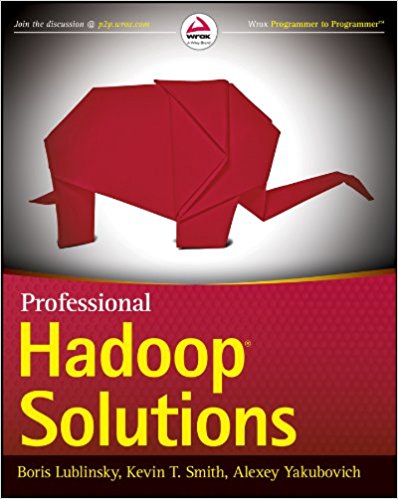

Professional Hadoop Solutions

This book covers a wide range of topics: storing data with HBase and HDFS, processing it with MapReduce, and automating data processing with Oozie. It also covers Hadoop's security features, how Hadoop works with Amazon Web Services, related best practices, and how to automate Hadoop processes in real time. It provides code examples in XML and Java, explains them in depth, and covers recent additions to the Hadoop ecosystem. The eBook positions itself as a comprehensive resource, with API coverage and an exposition of the deeper intricacies that allow developers and architects to customize and leverage Hadoop more effectively.

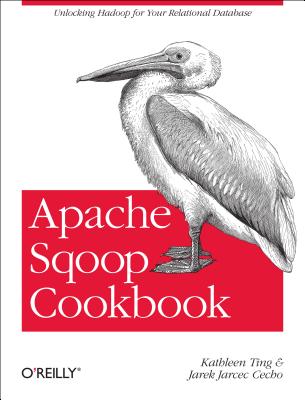

Apache Sqoop Cookbook

This guide shows how to use Apache Sqoop, with an emphasis on the command-line parameters used in common scenarios. The authors provide database examples for Oracle, MySQL, and PostgreSQL on GitHub that can be easily adapted to other relational systems such as Netezza, SQL Server, and Teradata.

Hadoop MapReduce Cookbook

According to its preface, the book teaches readers how to process large and complex datasets. It starts simple but still provides detailed knowledge of Hadoop, and it aims to be a straightforward, one-stop guide to getting things done. It contains 90 recipes presented in a simple, direct manner, along with step-by-step instructions and real-world examples.

Hadoop: The Definitive Guide, 2nd Ed

If you want to learn how to build and maintain reliable, scalable distributed systems with Hadoop, this book is for you. It is intended both for programmers who want to analyze datasets of any size and for administrators who want to set up and run Hadoop clusters. The second edition covers newer features such as Sqoop, Hive, and Avro, and includes case studies that may help you with specific problems.

MapReduce Design Patterns

According to its preface, the book is a blend of the familiar and the unique. It is dedicated to design patterns, meaning general guides or templates for solving problems. It is, however, more open-ended than a “cookbook” because the problems are not specified in advance. You have to dig deeper into the subject matter than simply copying and pasting, but a pattern will get you about 90% of the way regardless of the challenge at hand.

Hadoop Operations

This book is essential for anyone who maintains large, complex Hadoop clusters. It covers MapReduce, HDFS, Hadoop cluster planning, installation and configuration, authentication and authorization, identity, cluster maintenance, and resource management.

Programming Hive

Programming Hive explains how Hive provides an SQL dialect for querying data stored in HDFS, which makes it an indispensable tool for Hadoop experts. Hive also integrates with other storage systems that can be used with Hadoop, such as MapR-FS, Amazon S3, Cassandra, and HBase.

Hadoop Real World Solutions Cookbook

As its preface explains, this eBook helps developers become proficient at solving problems in the Hadoop space. Readers also become acquainted with a variety of Hadoop-related tools and the best practices for implementing them. The tools covered include Pig, Hive, MapReduce, Giraph, Mahout, Accumulo, HDFS, Ganglia, and Redis. The book aims to teach readers what they need to know to apply Hadoop to their own set of problems.

So, happy reading!

Besides learning from eBooks, it is vital to enroll in a good Big Data Hadoop certification course in Gurgaon. DexLab Analytics offers a range of high-end Big Data Hadoop training courses in Delhi that can help you hone your data skills.
