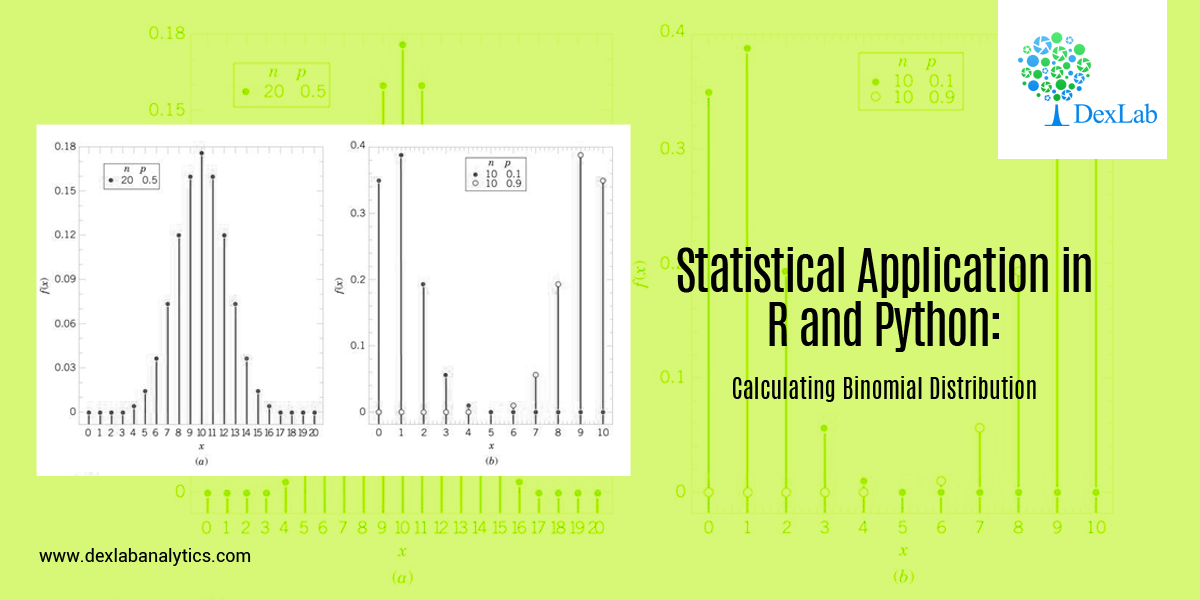

In this blog, we will take a look at the Binomial distribution. It is part of a series of blogs that will give you a clear idea of statistical applications using R and Python. Statistical Application In R & Python: Chapter 1 – Measure Of Central Tendency is the first blog in the series.

The binomial distribution is an extension of the Bernoulli distribution. The Bernoulli distribution describes a single trial and has only one parameter, the probability of success.

Now, consider a case where we have “n” trials and want the probability of a given number of successes among them. This is the Binomial case.

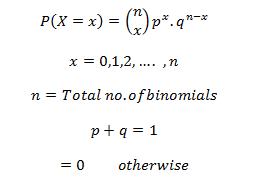

The binomial distribution has two parameters: the number of trials (n) and the probability of success (p). Its mean is the product of the two parameters, n multiplied by p. It is a discrete probability distribution. Each trial is assumed to have only two outcomes, success or failure, and the trials are assumed to be independent with the same probability of success.

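If X is a discrete random variable taking only non-negative integer values, it is said to follow a binomial distribution with parameters n and p if its probability mass function is:

$$P(X = x) = \binom{n}{x} p^{x} (1 - p)^{n - x}, \quad x = 0, 1, 2, \ldots, n$$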
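As a quick check of the definition, here is a minimal Python sketch of the binomial probability mass function using only the standard library. The function name `binomial_pmf` and the values n = 10 and p = 0.3 are illustrative assumptions, not part of the original example. The sketch confirms that the probabilities add up to 1 and that the mean equals n multiplied by p.

```python
from math import comb


def binomial_pmf(x: int, n: int, p: float) -> float:
    """Probability of exactly x successes in n independent trials."""
    return comb(n, x) * p**x * (1 - p) ** (n - x)


# Illustrative values only (assumed for this sketch).
n, p = 10, 0.3

# Probability of every possible number of successes, 0 through n.
probabilities = [binomial_pmf(x, n, p) for x in range(n + 1)]

# A valid probability mass function sums to 1.
total = sum(probabilities)

# The mean is the probability-weighted average of the outcomes.
mean = sum(x * px for x, px in enumerate(probabilities))

print(f"sum of probabilities = {total:.6f}")
print(f"mean from the PMF    = {mean:.6f}")
print(f"n * p                = {n * p:.6f}")
```

The last two printed values should agree, which is the "mean is n multiplied by p" property in numbers.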

Application:

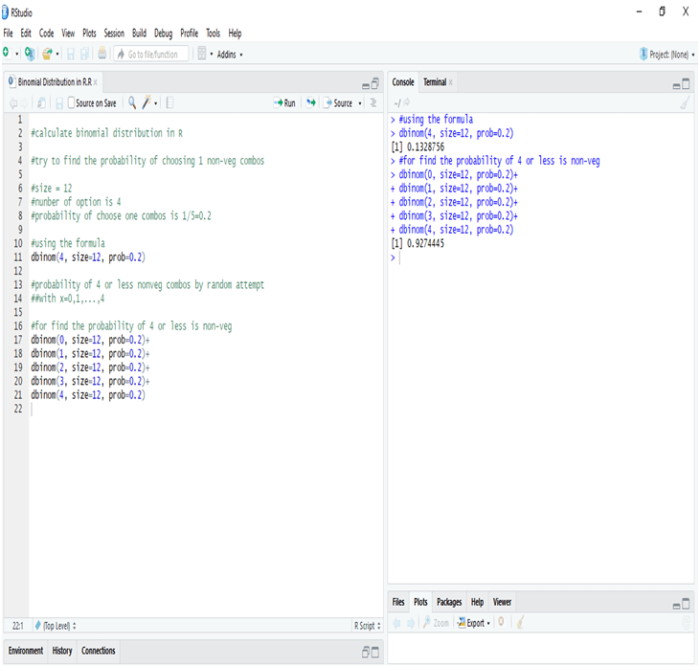

A food shop starts an offer for the festive season. It has 12 different baskets; each basket has 5 combos, and only 1 of them is non-veg. Find the probability of getting 4 or fewer non-veg combos if a consumer picks one combo at random from every basket.

Since only 1 out of 5 combos is non-veg, the probability of choosing a non-veg combo at random is 1/5 = 0.2. With one pick from each of the 12 baskets, we have n = 12 and p = 0.2.
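Written out for the 12 baskets with a success probability of 0.2, the required probability is the sum of the binomial probabilities for 0, 1, 2, 3 and 4 non-veg combos. The notation below is a sketch that treats X as the number of non-veg combos picked:

$$P(X \le 4) = \sum_{x=0}^{4} \binom{12}{x} (0.2)^{x} (0.8)^{12 - x} \approx 0.9274$$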

Calculate Binomial Distribution in R:

In R, `dbinom(4, size = 12, prob = 0.2)` gives the probability of exactly four non-veg combos in the twelve baskets, about 13.29%, whereas `pbinom(4, size = 12, prob = 0.2)` gives the probability of four or fewer non-veg combos, about 92.74%.

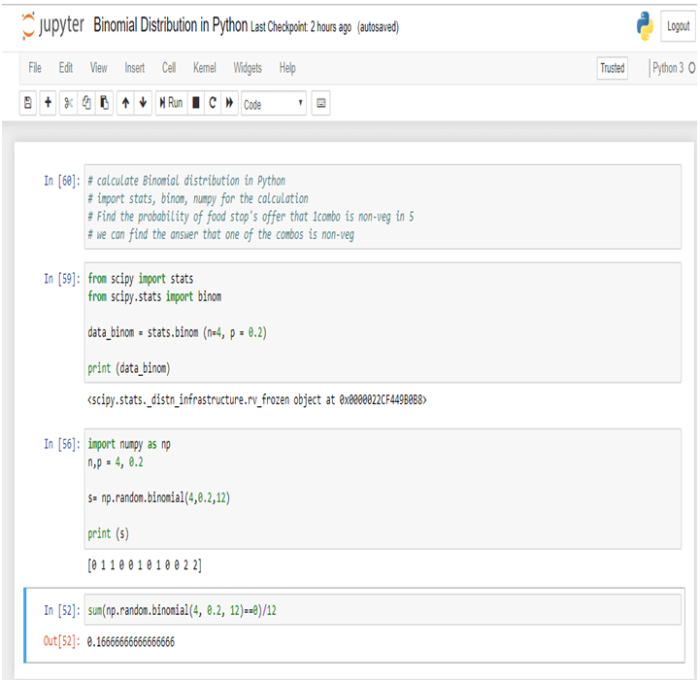

Calculate Binomial Distribution in Python:

In Python, the probability of exactly four non-veg combos in the twelve baskets is about 13.29%, and the probability of four or fewer is about 92.74%.
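One way to run the food shop calculation in Python is sketched below. It uses only the standard library, and the helper names `binom_pmf` and `binom_cdf` are assumptions made for this illustration rather than the code from the original post.

```python
from math import comb


def binom_pmf(k: int, n: int, p: float) -> float:
    """P(X = k): probability of exactly k successes in n trials."""
    return comb(n, k) * p**k * (1 - p) ** (n - k)


def binom_cdf(k: int, n: int, p: float) -> float:
    """P(X <= k): probability of at most k successes in n trials."""
    return sum(binom_pmf(i, n, p) for i in range(k + 1))


n_baskets = 12     # one pick from each of the 12 baskets
p_non_veg = 1 / 5  # 1 non-veg combo out of 5 in every basket

exactly_four = binom_pmf(4, n_baskets, p_non_veg)
four_or_fewer = binom_cdf(4, n_baskets, p_non_veg)

print(f"P(X = 4)  = {exactly_four:.4f}")   # 0.1329
print(f"P(X <= 4) = {four_or_fewer:.4f}")  # 0.9274
```

If SciPy is installed, `scipy.stats.binom.pmf(4, 12, 0.2)` and `scipy.stats.binom.cdf(4, 12, 0.2)` should return the same two values.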

Conclusion:

The binomial distribution lets us calculate the probability of a given number of successes in “n” independent trials. Each trial has only two possible outcomes, such as “Yes” or “No”.

Dexlab Analytics is a pioneering institute of Data Science, with peerless trainers to help you ease your journey with Python Certification, R Programming Certification and Big Data Certification along with numerous other advanced and/or career oriented courses in Computer Science.
