Technology bigwigs such as Facebook, eBay, Amazon and Yahoo vouch for Apache Spark to power their services. Why? Because Apache Spark is widely regarded as one of the fastest engines for big data processing. Rather than writing intermediate results to disk, Spark keeps working data in memory (RAM) wherever it can, which makes it well suited to fast data processing. It offers rich APIs in Python, Scala, Java and R, and for many workloads it is more efficient than Hadoop MapReduce. The main purpose of Spark is to provide a unified platform for big data applications, one that can also be integrated easily with the Hadoop ecosystem.

Apache Spark: The Purpose

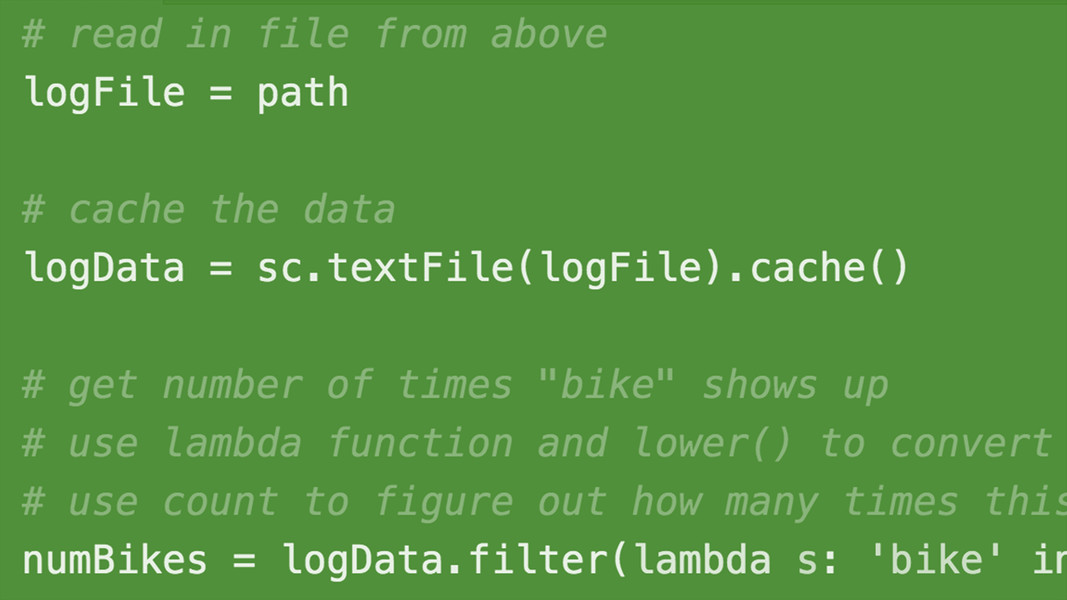

Many machine learning processes involve heavy computation, and Apache Spark is one of the most effective and easiest ways to tackle them. In a competitive industry, there is always a pressing need for an engine capable of processing data in real time, performing in-memory processing and executing in batch mode. Apache Spark provides all this and more! Real-time streaming, in-memory processing, interactive processing, batch processing and graph processing, all delivered through a fast, simple and effective interface, are the USP of Apache Spark.

Practical Applications:

Entertainment

Spark is widely used in the gaming industry to identify patterns in real time and react to them without delay. Targeted advertising, player retention and automatic adjustment of game difficulty are a few of the tasks it is deployed for.

E-commerce

Real-time transaction information can be used to improve recommendation systems and to spot new trends and demands. Unstructured data sources, such as customer feedback, are useful too. Machine learning algorithms running on Apache Spark process the millions of interactions that users perform on an e-commerce platform.

Finance and Security

Apache Spark is well suited to fraud and intrusion detection. Across the finance and security sector, Spark coupled with machine learning algorithms evaluates business spending and gives banks the tools to decide how to control their finances, which helps in finding problems within the financial industry quickly and effectively. For example, PayPal relies on ML techniques, with deep learning and neural networks used the most.

Healthcare

The healthcare industry uses Spark to analyze patients' past health records in order to predict future health complications. It is also used to reduce the processing time of genomic sequencing data, which is a bonus.

Machine Learning and Apache Spark

Companies are reaping benefits by combining Apache Spark with ML algorithms. For example, Yahoo uses the two together to pick out new topics that users would find interesting. Similarly, Netflix uses Spark with ML for real-time stream processing and for making better recommendations to users based on their history.

Apache Spark has a separate library dedicated to ML, known as MLlib. It includes algorithms for classification, regression, collaborative filtering, dimensionality reduction, clustering and more.
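To make one of these ideas concrete, here is a minimal sketch of user-based collaborative filtering in plain Python. It is not Spark code and not MLlib's API: MLlib's own collaborative filtering is built on alternating least squares (ALS) and is designed to run across a cluster. The ratings data and the function names `cosine_similarity` and `recommend` are invented for this illustration only.

```python
from math import sqrt

# Hypothetical user -> {item: rating} data, invented for this illustration.
ratings = {
    "alice": {"film_a": 5.0, "film_b": 4.0, "film_c": 1.0},
    "bob": {"film_a": 4.0, "film_b": 5.0, "film_d": 5.0},
    "carol": {"film_a": 1.0, "film_c": 5.0, "film_d": 2.0, "film_e": 3.0},
}


def cosine_similarity(a, b):
    """Cosine similarity between two sparse rating dicts."""
    shared = set(a) & set(b)
    if not shared:
        return 0.0
    dot = sum(a[item] * b[item] for item in shared)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


def recommend(user, ratings, top_n=2):
    """Rank items the user has not rated, using the ratings of other
    users weighted by how similar each of them is to this user."""
    scores, weights = {}, {}
    for other, other_ratings in ratings.items():
        if other == user:
            continue
        sim = cosine_similarity(ratings[user], other_ratings)
        if sim <= 0:
            continue
        for item, rating in other_ratings.items():
            if item in ratings[user]:
                continue  # skip items the user has already rated
            scores[item] = scores.get(item, 0.0) + sim * rating
            weights[item] = weights.get(item, 0.0) + sim
    # Similarity-weighted average rating per candidate item, best first.
    ranked = sorted(scores, key=lambda i: scores[i] / weights[i], reverse=True)
    return ranked[:top_n]


print(recommend("alice", ratings))  # ['film_d', 'film_e']
```

Here "alice" rates films much as "bob" does, so bob's high rating for film_d counts for more than carol's low one. On a real platform with millions of interactions, this pairwise comparison does not scale on a single machine, which is where a distributed engine such as Spark comes in.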

Last Thoughts

No wonder, then, that Apache Spark offers such an innovative, powerful API for ML applications. Widely used for predictive analytics, fraud detection and recommendation engines, Spark promises to make ML easier to apply and smoother to operate.

Are you interested in Apache Spark Programming training in Gurgaon? DexLab Analytics is the place to be! Their Spark Core training and placement assistance is probably the best in town. So, what are you waiting for?

The blog has been sourced from: www.analyticsindiamag.com/how-apache-spark-became-essential-for-machine-learning
